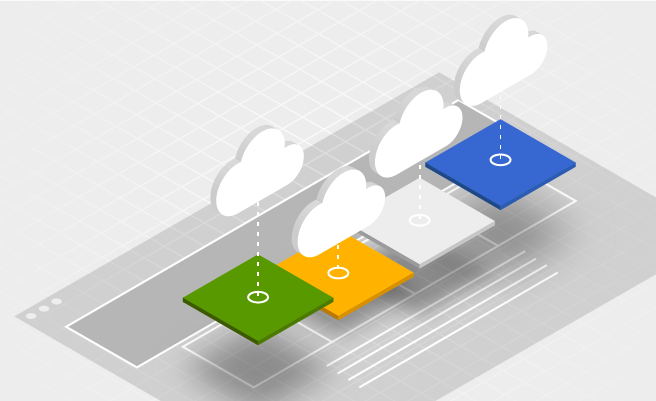

Cloud CMS: 4 ways Magnolia can be used in the cloud

Many companies are considering moving away from on-premises content management software and toward cloud-based solutions. A cloud-based CMS can save time and money on deployment, improve collaboration between developers and content providers, and make it easier to keep pace with evolving technologies.

Magnolia CMS and Amazon Web Services (AWS) offer four ways to get an optimal deployment setup and seamless integration with your existing systems when you switch to a cloud content management solution.

Running Magnolia on a cloud platform is not all that different from running Magnolia on-premises. However, if you want to take advantage of the powerful features offered by AWS for auto-scaling and self-healing, you will have to automate the steps to bring up a new Magnolia instance and synchronize its content with other running Magnolia instances. There are a number of ways you can do this on AWS, each with advantages and disadvantages.

Option #1: standard CMS deployment

The standard CMS deployment option works with AWS, as well as other cloud providers. This option employs the standard Magnolia architecture, in which Magnolia public instances are registered with the Magnolia author instance. In this structure, the Magnolia author instance uses transactional publication to push new content from the content provider to the Magnolia public instances.

You have several choices for synchronizing the content on a newly launched Magnolia public instance. You can restore a backup made with the Magnolia Backup module, or you can restore a backup of the database and file system of the JCR repository. Alternatively, you can synchronize the content from the Magnolia author to the new public instance using the Magnolia Synchronization module. Which you choose depends on how much content needs to be synchronized and how often it is changed or updated. You can even mix and match: restore most of the content from a backup, then use the Magnolia Synchronization module to update any content that has changed since the backup was made.
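The mix-and-match approach comes down to finding the content that changed after the backup was taken. The following is a minimal sketch of that selection step only. It does not call the Magnolia Backup or Synchronization modules; `ContentItem` and `items_to_sync_after_restore` are hypothetical names used purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ContentItem:
    """Hypothetical stand-in for a piece of published content."""
    path: str
    last_modified: datetime


def items_to_sync_after_restore(items, backup_taken_at):
    """Return the paths modified after the backup was taken, sorted by path.

    Everything else is already covered by the restored backup.
    """
    return sorted(
        item.path for item in items if item.last_modified > backup_taken_at
    )


# Example data (invented for illustration).
backup_taken_at = datetime(2024, 1, 10, 2, 0)
items = [
    ContentItem("/home", datetime(2024, 1, 9, 15, 30)),
    ContentItem("/news/launch", datetime(2024, 1, 10, 9, 45)),
    ContentItem("/about", datetime(2024, 1, 11, 8, 0)),
]

stale_paths = items_to_sync_after_restore(items, backup_taken_at)
print(stale_paths)
```

The less content changes between backups, the shorter this list is, and the faster a new public instance can be brought up to date after the restore.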

Advantages of standard Magnolia deployment

This option minimizes the number of Magnolia servers needed to deploy the CMS, which means that no standby Magnolia instances are required. Although bringing up a new Magnolia public instance will require some additional effort, there are a number of ways to deal with auto-scaling situations.

Drawbacks of standard Magnolia deployment

On the other hand, using an active Magnolia public instance that is serving traffic to make a backup can be risky. If the load on one of the public instances gets too high, running a backup on that instance may cause it to stop responding. If that occurs, AWS auto-scaling may create another EC2 instance to replace it, which in turn needs a backup from another loaded instance. This can set off a series of failures that becomes catastrophic.

Option #2: Magnolia deployment with content source target

The standard deployment option can lead to load issues when running a backup. However, the use of a content source target can alleviate these load issues. The content source target, a type of Magnolia public instance which is registered as a subscriber with the Magnolia author instance, only services requests made within the private subnet where it resides, thus reducing the chance that a backup will fail.

You have the same choices for synchronizing the content on a new Magnolia public instance as with Option #1. However, with the content source target, you can now make backups reliably and safely without affecting the Magnolia public instances serving your content.

Advantages of CMS deployment with content source target

The major advantage of this option is that it greatly reduces the risk that a backup will fail during auto-scaling. The use of a content source target ensures that the content backup process is separated from the creation of a new Magnolia instance. This robustness also eliminates the potential for a cascading failure, since the content source target is separate from the auto-scaling group for Magnolia public instances.

Drawbacks of CMS deployment with content source target

The use of a content source target to handle backups creates a single point of failure for auto-scaling. If for some reason the content source target were not available, the backup process would fail, as would the auto-scaling process. Also, restoring a backup of a large content repository from the content source target could exceed the maximum execution time for an AWS Lambda function.
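A rough way to judge the Lambda risk is to estimate the restore duration and compare it with the Lambda timeout. The sketch below assumes the restore time scales with repository size at a steady throughput, which is a simplification; the throughput figures and the safety factor are invented for illustration. The 900-second value is the maximum configurable AWS Lambda timeout (15 minutes) at the time of writing.

```python
# Maximum configurable AWS Lambda timeout (15 minutes) at the time of writing.
LAMBDA_MAX_TIMEOUT_SECONDS = 900


def restore_fits_in_lambda(repo_size_mb, throughput_mb_per_s, safety_factor=0.8):
    """Estimate whether a restore would finish within the Lambda timeout.

    Assumes restore time = size / throughput (a simplification), and keeps
    a safety margin so the estimate does not sit right at the limit.
    """
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput_mb_per_s must be positive")
    estimated_seconds = repo_size_mb / throughput_mb_per_s
    return estimated_seconds <= LAMBDA_MAX_TIMEOUT_SECONDS * safety_factor


# Illustrative numbers only: 2 GB and 50 GB repositories at 20 MB/s.
small_repo_ok = restore_fits_in_lambda(2_000, 20)    # about 100 seconds
large_repo_ok = restore_fits_in_lambda(50_000, 20)   # about 2,500 seconds
print(small_repo_ok, large_repo_ok)
```

If the estimate does not fit, the restore would need to run somewhere without that time limit, for example on the new instance itself.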

Option #3: CMS deployment with JCR clustering

This option uses JCR clustering to sidestep the problem of synchronizing the content on a new Magnolia public instance. When the Magnolia instances share a clustered JCR repository, all the content is already available in that shared repository. A Magnolia deployment that uses JCR clustering has a single “slave” Magnolia public instance that receives all publications from the Magnolia author and updates the repository, while all the other public instances share the same repository.

Using JCR clustering avoids the content synchronization problem entirely. All the content is already present in the shared JCR repository and is available to a newly launched Magnolia public instance.

Advantages of CMS deployment with JCR clustering

The use of the slave Magnolia public instance eliminates the need to register a new Magnolia public instance as a subscriber on the Magnolia author instance. Also, since only the slave Magnolia public instance (rather than all the public instances) receives the publications, the time required to publish content from the Magnolia author instance stays constant.

Drawbacks of CMS deployment with JCR clustering

Just as with the content source target option, the shared JCR repository leaves the system vulnerable to a single point of failure. If one Magnolia instance somehow corrupts the JCR repository, the rest of the Magnolia instances that share the repository will be adversely affected. For instance, the improper shutdown of a Magnolia public instance can potentially corrupt the JCR repository, which can affect every instance that shares that repository.

Option #4: CMS deployment with RabbitMQ activation

In a typical deployment, all subscribed public instances receive and save the published content from the Magnolia author instance. This “transactional activation” keeps all public subscribers in sync. However, the more public instances there are, the longer it takes to complete a successful publication. RabbitMQ, an open-source message broker, offers an alternative to transactional activation. Magnolia uses RabbitMQ to deliver activation messages from the Magnolia author instance to the public subscribers.

Synchronizing content when using RabbitMQ activation can use the same techniques as the other options (restoring backups and synchronizing content with the Synchronization module), but with RabbitMQ you have another trick up your sleeve.

Activations with RabbitMQ are distributed as independent “deltas” over queues. RabbitMQ can store these activations in its queues waiting for new Magnolia public instances to be launched. Once a new Magnolia public instance is launched, it will receive any activation deltas waiting in its queue; there is no need to have a separate step of synchronizing any recently changed content with the Magnolia Synchronization module.
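The standby queue idea can be illustrated with a small in-memory model. This is a toy simulation, not the RabbitMQ client API or Magnolia's activation code: `ActivationExchange`, the queue names, and the delta strings are all hypothetical. It only shows how deltas accumulate in a queue until a new public instance consumes them.

```python
from collections import deque


class ActivationExchange:
    """Toy fan-out model: each published delta is copied to every bound queue."""

    def __init__(self):
        self.queues = {}

    def bind(self, queue_name):
        # Create the queue if it does not exist yet.
        self.queues.setdefault(queue_name, deque())

    def publish(self, delta):
        # Every bound queue gets its own copy of the delta.
        for queue in self.queues.values():
            queue.append(delta)

    def drain(self, queue_name):
        # Deliver all waiting deltas, in publication order.
        queue = self.queues[queue_name]
        delivered = []
        while queue:
            delivered.append(queue.popleft())
        return delivered


exchange = ActivationExchange()
exchange.bind("public-1")   # a running public instance
exchange.bind("standby")    # queue waiting for a future instance

exchange.publish("activate /news/launch")
live = exchange.drain("public-1")          # the running instance keeps up
exchange.publish("deactivate /promo/old")

# A new public instance launches and takes over the standby queue.
pending_for_new_instance = exchange.drain("standby")
print(pending_for_new_instance)
```

The new instance receives both deltas in order, even though it did not exist when they were published.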

Advantages of CMS deployment with RabbitMQ activation

RabbitMQ activation makes the process of starting and stopping Magnolia public instances much easier, as it allows many Magnolia public instances to connect with a single Magnolia author without taking more time to publish content. Also, since the public instance is registered with RabbitMQ instead of the Magnolia author, the need to register the new public instance with the Magnolia author is removed.

Drawbacks of CMS deployment with RabbitMQ activation

While RabbitMQ can be used to create standby activation queues, these queues have a limit to how many messages they can hold before they must be cleared out. Since any publications waiting in the standby queues will also be contained in the backup, the standby activation queues should be flushed at the same time as the backup.
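Flushing the standby queue together with the backup can be sketched as follows. This is again an in-memory model under stated assumptions, not RabbitMQ's API: `StandbyQueue`, `backup_and_flush`, and the choice to raise an error when the queue is full are all hypothetical and chosen only for illustration.

```python
from collections import deque


class StandbyQueue:
    """Toy bounded queue holding activation deltas for a future instance."""

    def __init__(self, max_messages):
        self.max_messages = max_messages
        self.messages = deque()

    def enqueue(self, delta):
        # Assumption for this sketch: a full queue rejects new deltas.
        if len(self.messages) >= self.max_messages:
            raise OverflowError("standby queue is full; flush it with the next backup")
        self.messages.append(delta)

    def flush(self):
        # Drop all waiting deltas and report how many were removed.
        flushed = len(self.messages)
        self.messages.clear()
        return flushed


def backup_and_flush(published_content, queue):
    """Take a snapshot, then flush the deltas the snapshot already contains."""
    snapshot = list(published_content)
    queue.flush()
    return snapshot


published = []
queue = StandbyQueue(max_messages=3)
for delta in ["page-a", "page-b"]:
    published.append(delta)
    queue.enqueue(delta)

snapshot = backup_and_flush(published, queue)
print(snapshot, len(queue.messages))
```

After the flush, an instance restored from the snapshot only needs the deltas published since that backup, and the queue has room again.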

Deploy Magnolia CMS in the cloud

Download these free blueprints on how to deploy Magnolia CMS in Amazon Web Services (AWS) or other cloud services.

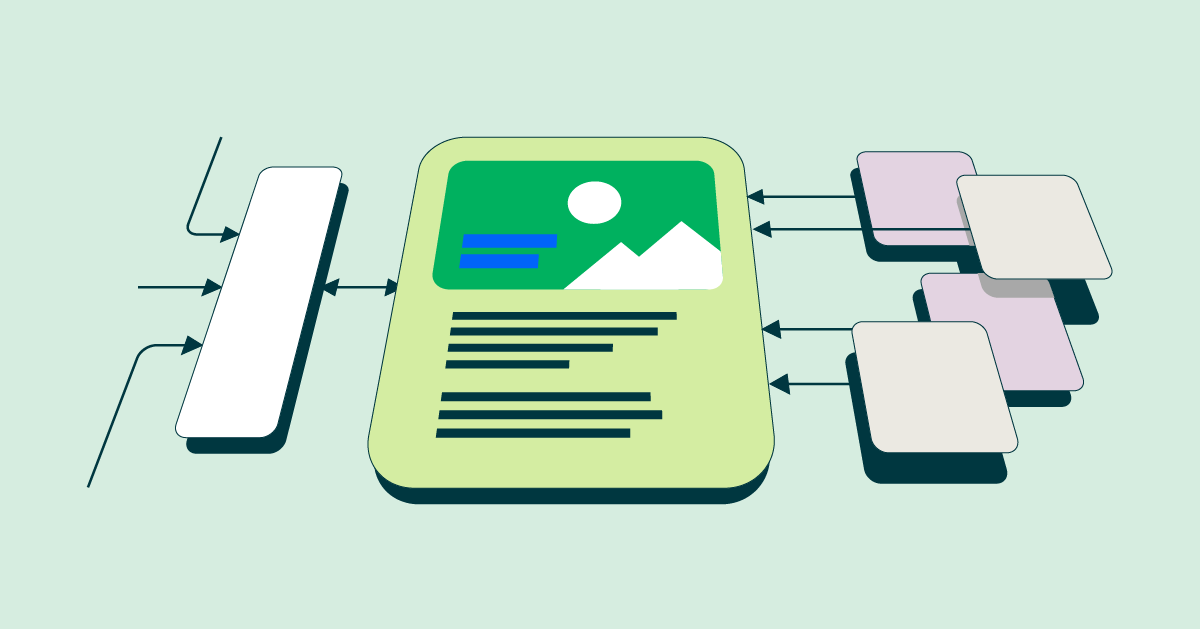

A CMS in the cloud: which option is right for you?

The best option for using Magnolia Enterprise CMS with a cloud provider depends on numerous variables. For instance, smaller content repositories could work well with a standard deployment or a deployment with a content source target, while deployments with more than five public instances subscribed to a single Magnolia author instance may benefit from JCR clustering or RabbitMQ activation.
